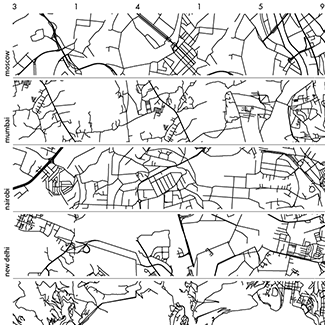

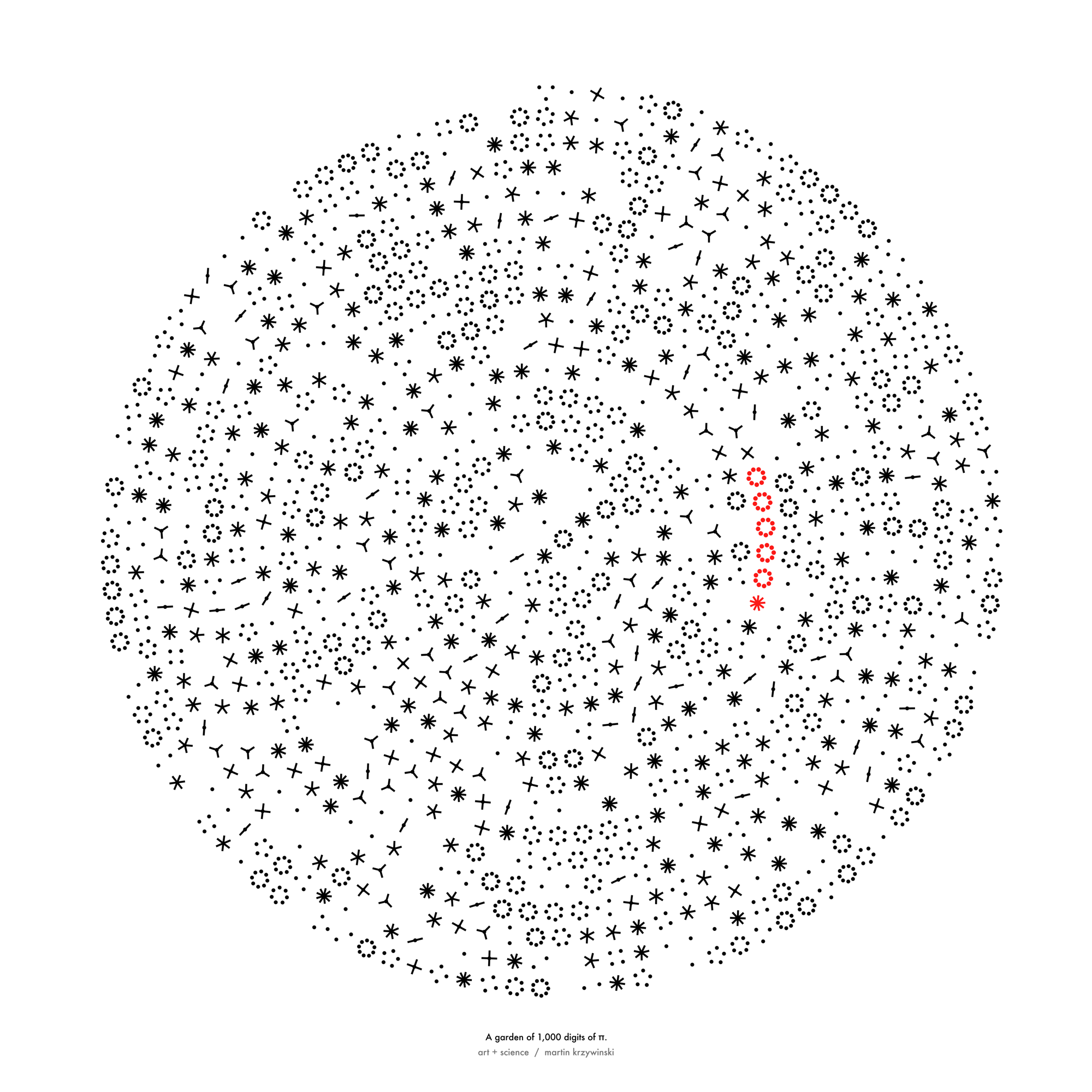

`\pi` Approximation Day Art Posters

The never-repeating digits of `\pi` can be approximated by 22/7 = 3.142857 to within 0.04%. These pages artistically and mathematically explore rational approximations to `\pi`. This 22/7 ratio is celebrated each year on July 22nd. If you like hand waving or back-of-envelope mathematics, this day is for you: `\pi` approximation day!

The `22/7` approximation of `\pi` is more accurate than using the first three digits `3.14`. In light of this, it is curious to point out that `\pi` Approximation Day depicts `\pi` 20% more accurately than the official `\pi` Day! The approximation is accurate within 0.04% while 3.14 is accurate to 0.05%.

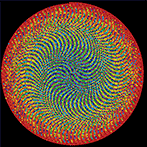

first 10,000 approximations to `\pi`

For each `m=1...10000` I found `n` such that `m/n` was the best approximation of `\pi`. You can download the entire list, which looks like this

m n m/n relative_error best_seen?

1 1 1.000000000000 0.681690113816 improved

2 1 2.000000000000 0.363380227632 improved

3 1 3.000000000000 0.045070341449 improved

4 1 4.000000000000 0.273239544735

5 2 2.500000000000 0.204225284541

7 2 3.500000000000 0.114084601643

8 3 2.666666666667 0.151173636843

9 4 2.250000000000 0.283802756086

10 3 3.333333333333 0.061032953946

11 4 2.750000000000 0.124647812995

12 5 2.400000000000 0.236056273159

13 4 3.250000000000 0.034507130097 improved

14 5 2.800000000000 0.108732318685

16 5 3.200000000000 0.018591635788 improved

17 5 3.400000000000 0.082253613025

18 5 3.600000000000 0.145915590262

19 6 3.166666666667 0.007981306249 improved

20 7 2.857142857143 0.090543182332

21 8 2.625000000000 0.164436548768

22 7 3.142857142857 0.000402499435 improved

23 7 3.285714285714 0.045875340318

24 7 3.428571428571 0.091348181202

...

354 113 3.132743362832 0.002816816734

355 113 3.141592920354 0.000000084914 improved

356 113 3.150442477876 0.002816986561

...

9998 3183 3.141061891298 0.000168946885

9999 3182 3.142363293526 0.000245302310

10000 3183 3.141690229343 0.000031059327

As the value of `m` is increased, better approximations are possible. For example, each of `13/4`, `16/5`, `19/6` and `22/7` are in turn better approximations of `\pi`. The line includes the improved flag if the approximation is better than others found thus far.

next best after 22/7

After `22/7`, the next better approximation is at `179/57`.

Out of all the 10,000 approximations, the best one is `355/113`, which is good to 7 digits (6 decimal places).

pi = 3.1415926

355/113 = 3.1415929

I've scanned to beyond `m=1000000` and `355/113` still remains as the only approximation that returns more correct digits than required to remember it.

increasingly accurate approximations

Here is a sequence of approximations that improve on all previous ones.

1 1 1.000000000000 0.681690113816 improved

2 1 2.000000000000 0.363380227632 improved

3 1 3.000000000000 0.045070341449 improved

13 4 3.250000000000 0.034507130097 improved

16 5 3.200000000000 0.018591635788 improved

19 6 3.166666666667 0.007981306249 improved

22 7 3.142857142857 0.000402499435 improved

179 57 3.140350877193 0.000395269704 improved

201 64 3.140625000000 0.000308013704 improved

223 71 3.140845070423 0.000237963113 improved

245 78 3.141025641026 0.000180485705 improved

267 85 3.141176470588 0.000132475164 improved

289 92 3.141304347826 0.000091770575 improved

311 99 3.141414141414 0.000056822190 improved

333 106 3.141509433962 0.000026489630 improved

355 113 3.141592920354 0.000000084914 improved

For all except one, these approximations aren't all good value for your digits.

For example, `179/57` requires you to remember 5 digits but only gets you 3 digits of `\pi` correct (3.14).

Only `355/113` gets you more digits than you need to remember—you need to memorize 6 but get 7 (3.141592) out of the approximation!

You could argue that `22/7` and `355/113` are the only approximations worth remembering. In fact, go ahead and do so.

approximations for large `m` and `n`

It's remarkable that there is no better `m/n` approximation after `355/113` for all `m \le 10000`.

What do we find for `m > 10000`?

Well, we have to move down the values of `m` all the way to 52,163 to find `52163/16604`. But for all this searching, our improvement in accuracy is miniscule—0.2%!

pi 3.141592653589793238

m n m/n relative_error

355 113 3.1415929203 0.00000008491

52163 16604 3.1415923873 0.00000008474

After 52,162 there is a slew improvements to the approximation.

104348 33215 3.1415926539 0.000000000106 208341 66317 3.1415926534 0.0000000000389 312689 99532 3.1415926536 0.00000000000927 833719 265381 3.141592653581 0.00000000000277 1146408 364913 3.14159265359 0.000000000000513 3126535 995207 3.141592653588 0.000000000000364 4272943 1360120 3.1415926535893 0.000000000000129 5419351 1725033 3.1415926535898 0.00000000000000705 42208400 13435351 3.1415926535897 0.00000000000000669 47627751 15160384 3.14159265358977 0.00000000000000512 53047102 16885417 3.14159265358978 0.00000000000000388 58466453 18610450 3.14159265358978 0.00000000000000287

I stopped looking after `m=58,466,453`.

Despite their accuracy, all these approximations require that you remember more or equal the number of digits than they return. The last one above requires you to memorize 17 (9+8) digits and returns only 14 digits of `\pi`.

The only exception to this is `355/113`, which returns 7 digits for its 6.

You can download the first 175 increasingly accurate approximations, calculated to extended precision (up to `58,466,453/18,610,450`).

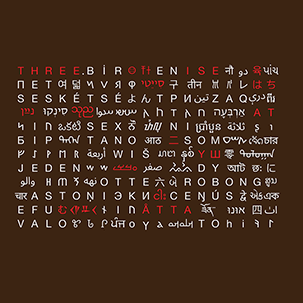

Nasa to send our human genome discs to the Moon

We'd like to say a ‘cosmic hello’: mathematics, culture, palaeontology, art and science, and ... human genomes.

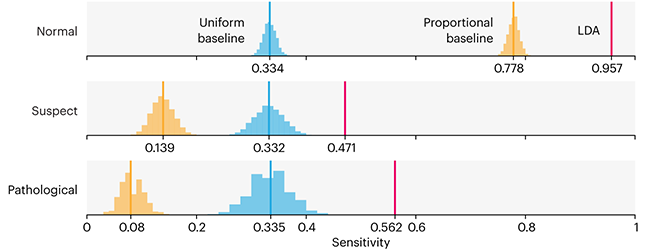

Comparing classifier performance with baselines

All animals are equal, but some animals are more equal than others. —George Orwell

This month, we will illustrate the importance of establishing a baseline performance level.

Baselines are typically generated independently for each dataset using very simple models. Their role is to set the minimum level of acceptable performance and help with comparing relative improvements in performance of other models.

Unfortunately, baselines are often overlooked and, in the presence of a class imbalance5, must be established with care.

Megahed, F.M, Chen, Y-J., Jones-Farmer, A., Rigdon, S.E., Krzywinski, M. & Altman, N. (2024) Points of significance: Comparing classifier performance with baselines. Nat. Methods 20.

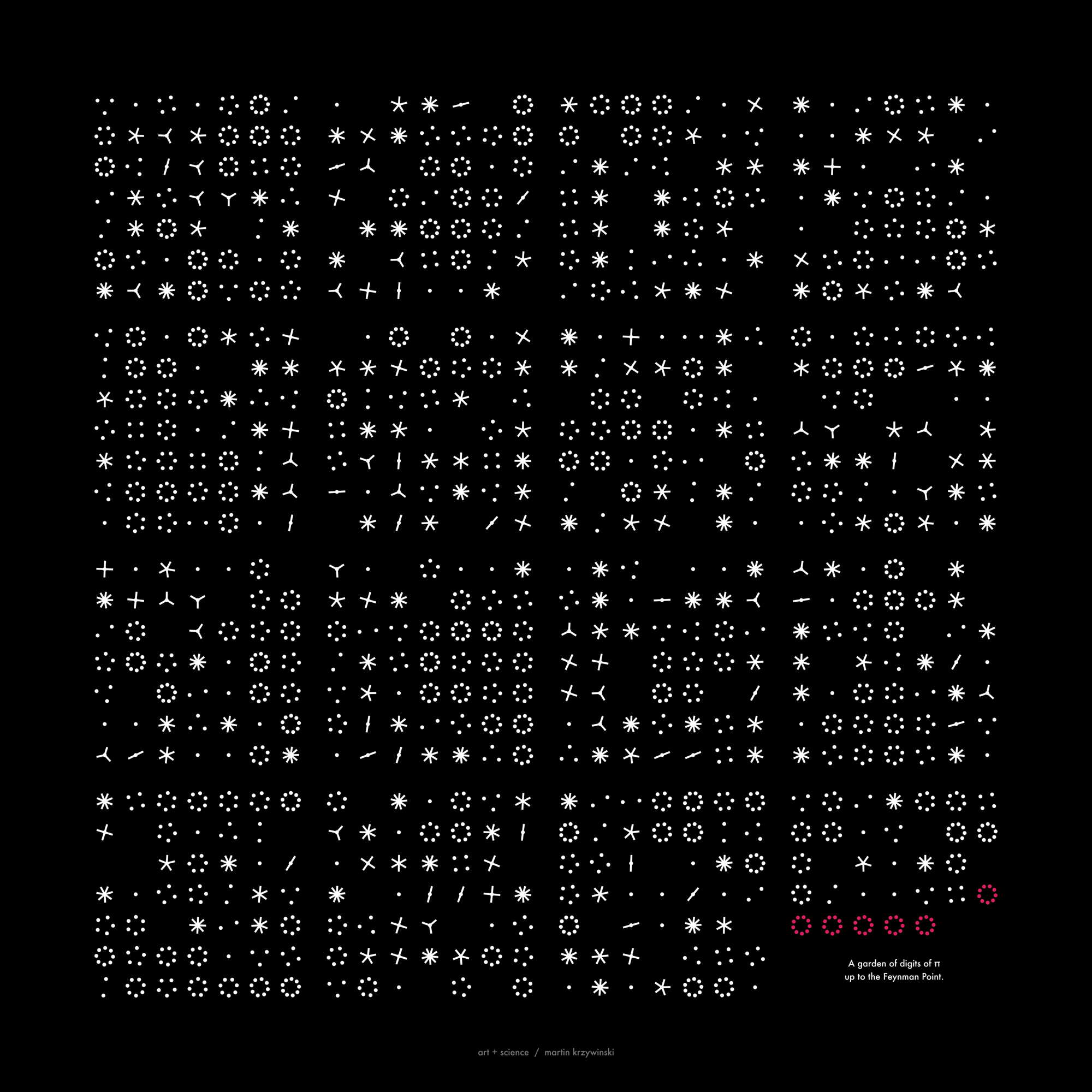

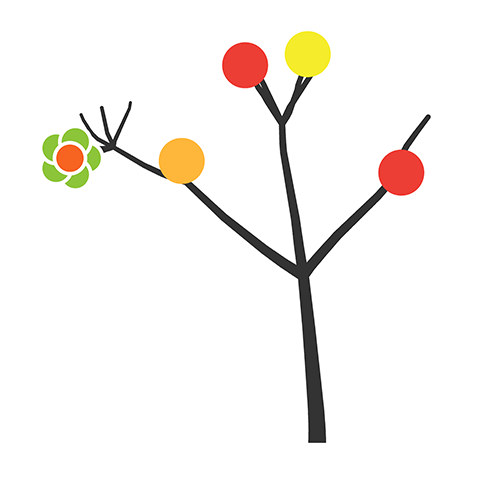

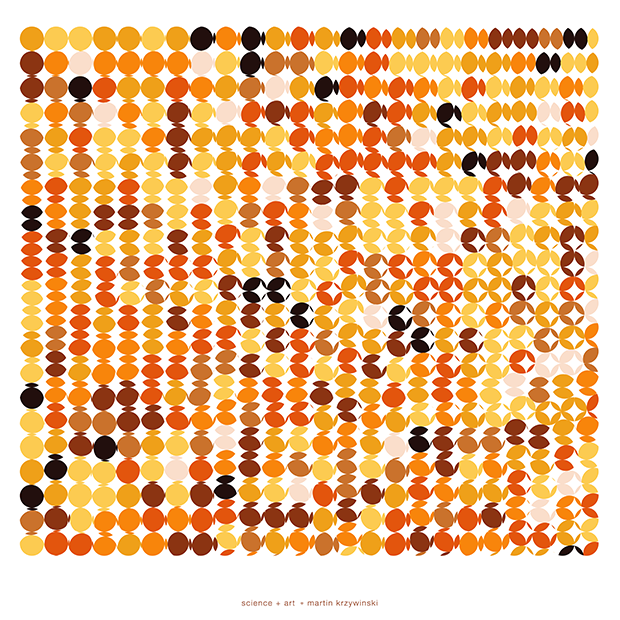

Happy 2024 π Day—

sunflowers ho!

Celebrate π Day (March 14th) and dig into the digit garden. Let's grow something.

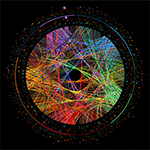

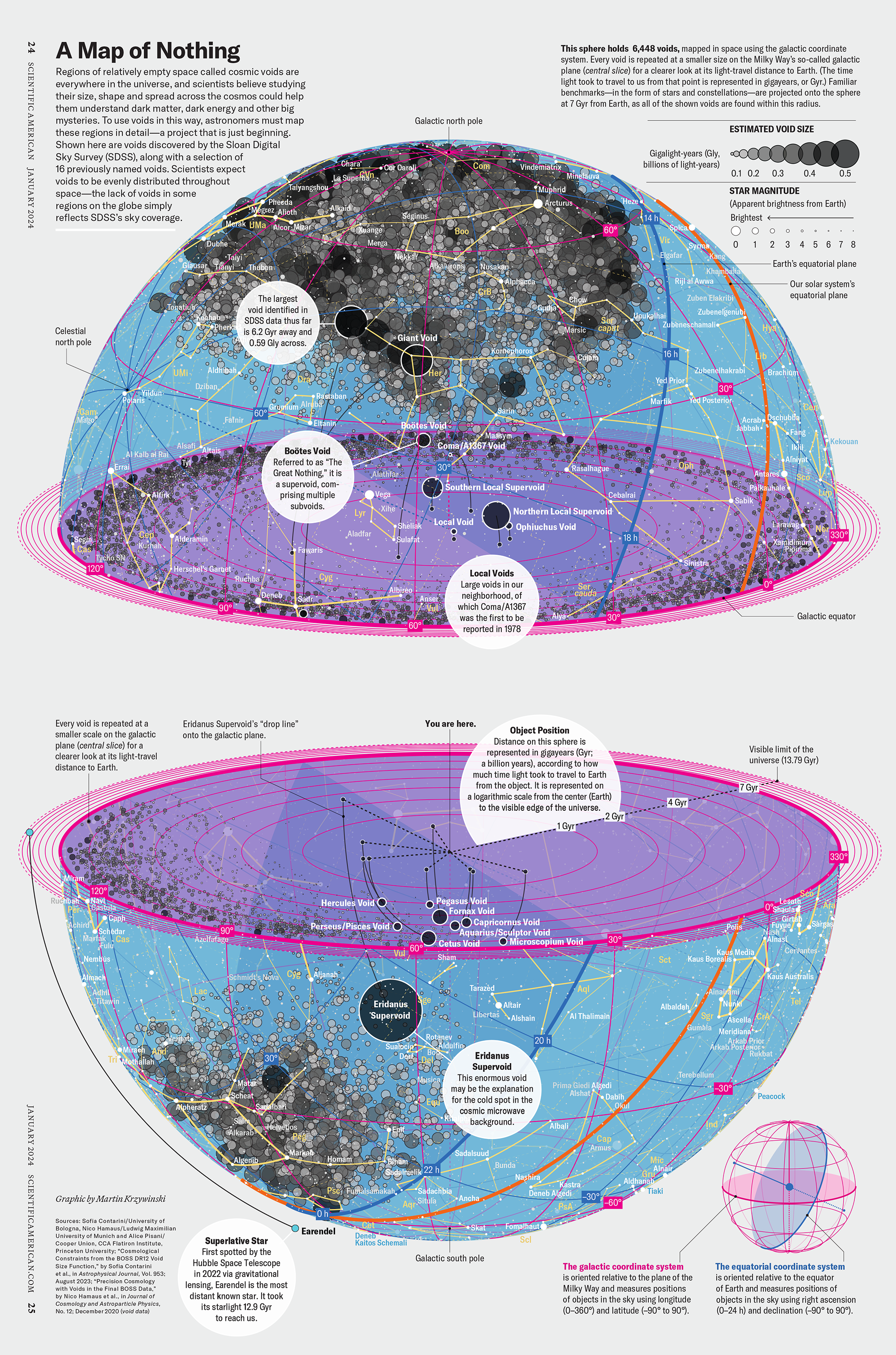

How Analyzing Cosmic Nothing Might Explain Everything

Huge empty areas of the universe called voids could help solve the greatest mysteries in the cosmos.

My graphic accompanying How Analyzing Cosmic Nothing Might Explain Everything in the January 2024 issue of Scientific American depicts the entire Universe in a two-page spread — full of nothing.

The graphic uses the latest data from SDSS 12 and is an update to my Superclusters and Voids poster.

Michael Lemonick (editor) explains on the graphic:

“Regions of relatively empty space called cosmic voids are everywhere in the universe, and scientists believe studying their size, shape and spread across the cosmos could help them understand dark matter, dark energy and other big mysteries.

To use voids in this way, astronomers must map these regions in detail—a project that is just beginning.

Shown here are voids discovered by the Sloan Digital Sky Survey (SDSS), along with a selection of 16 previously named voids. Scientists expect voids to be evenly distributed throughout space—the lack of voids in some regions on the globe simply reflects SDSS’s sky coverage.”

voids

Sofia Contarini, Alice Pisani, Nico Hamaus, Federico Marulli Lauro Moscardini & Marco Baldi (2023) Cosmological Constraints from the BOSS DR12 Void Size Function Astrophysical Journal 953:46.

Nico Hamaus, Alice Pisani, Jin-Ah Choi, Guilhem Lavaux, Benjamin D. Wandelt & Jochen Weller (2020) Journal of Cosmology and Astroparticle Physics 2020:023.

Sloan Digital Sky Survey Data Release 12

Alan MacRobert (Sky & Telescope), Paulina Rowicka/Martin Krzywinski (revisions & Microscopium)

Hoffleit & Warren Jr. (1991) The Bright Star Catalog, 5th Revised Edition (Preliminary Version).

H0 = 67.4 km/(Mpc·s), Ωm = 0.315, Ωv = 0.685. Planck collaboration Planck 2018 results. VI. Cosmological parameters (2018).

constellation figures

stars

cosmology

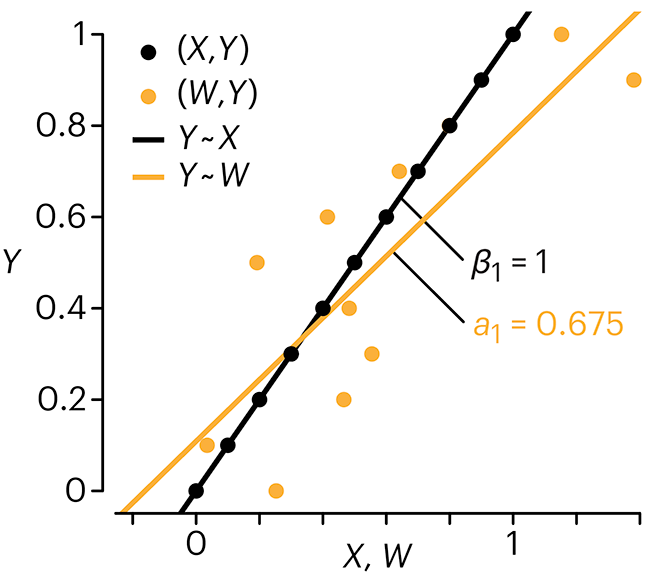

Error in predictor variables

It is the mark of an educated mind to rest satisfied with the degree of precision that the nature of the subject admits and not to seek exactness where only an approximation is possible. —Aristotle

In regression, the predictors are (typically) assumed to have known values that are measured without error.

Practically, however, predictors are often measured with error. This has a profound (but predictable) effect on the estimates of relationships among variables – the so-called “error in variables” problem.

Error in measuring the predictors is often ignored. In this column, we discuss when ignoring this error is harmless and when it can lead to large bias that can leads us to miss important effects.

Altman, N. & Krzywinski, M. (2024) Points of significance: Error in predictor variables. Nat. Methods 20.

Background reading

Altman, N. & Krzywinski, M. (2015) Points of significance: Simple linear regression. Nat. Methods 12:999–1000.

Lever, J., Krzywinski, M. & Altman, N. (2016) Points of significance: Logistic regression. Nat. Methods 13:541–542 (2016).

Das, K., Krzywinski, M. & Altman, N. (2019) Points of significance: Quantile regression. Nat. Methods 16:451–452.

Convolutional neural networks

Nature uses only the longest threads to weave her patterns, so that each small piece of her fabric reveals the organization of the entire tapestry. – Richard Feynman

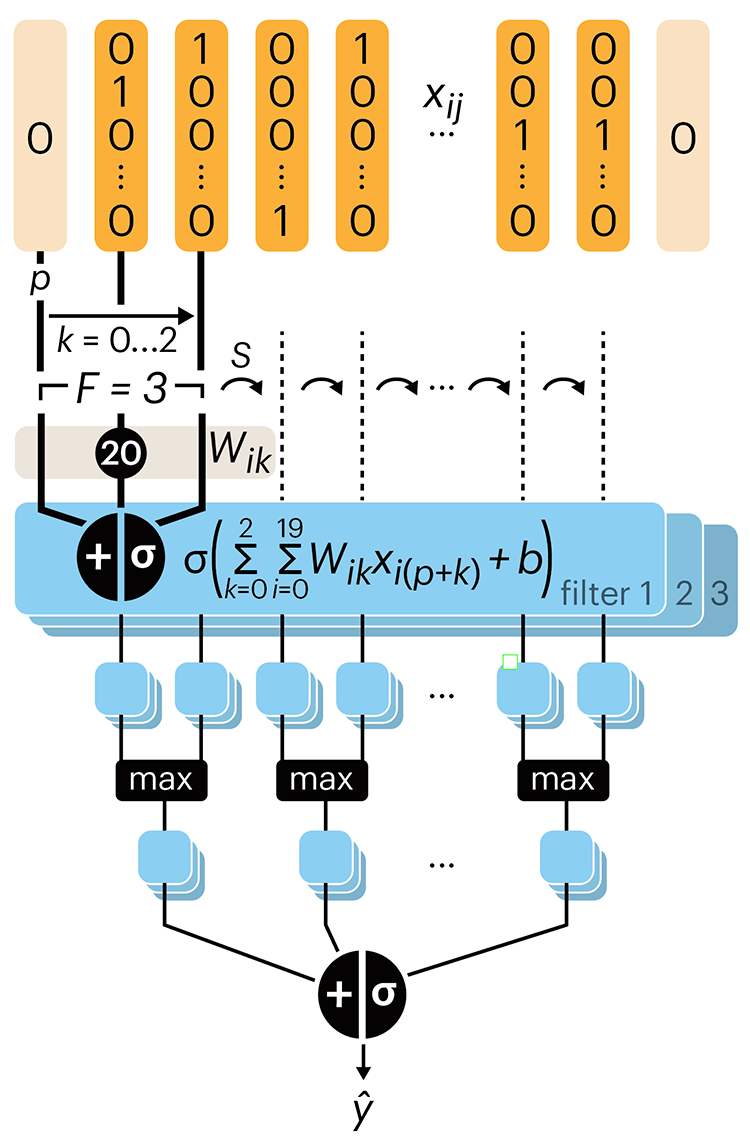

Following up on our Neural network primer column, this month we explore a different kind of network architecture: a convolutional network.

The convolutional network replaces the hidden layer of a fully connected network (FCN) with one or more filters (a kind of neuron that looks at the input within a narrow window).

Even through convolutional networks have far fewer neurons that an FCN, they can perform substantially better for certain kinds of problems, such as sequence motif detection.

Derry, A., Krzywinski, M & Altman, N. (2023) Points of significance: Convolutional neural networks. Nature Methods 20:1269–1270.

Background reading

Derry, A., Krzywinski, M. & Altman, N. (2023) Points of significance: Neural network primer. Nature Methods 20:165–167.

Lever, J., Krzywinski, M. & Altman, N. (2016) Points of significance: Logistic regression. Nature Methods 13:541–542.